![[image of the Head of a GNU]](/graphics/gnu-head-sm.jpg)

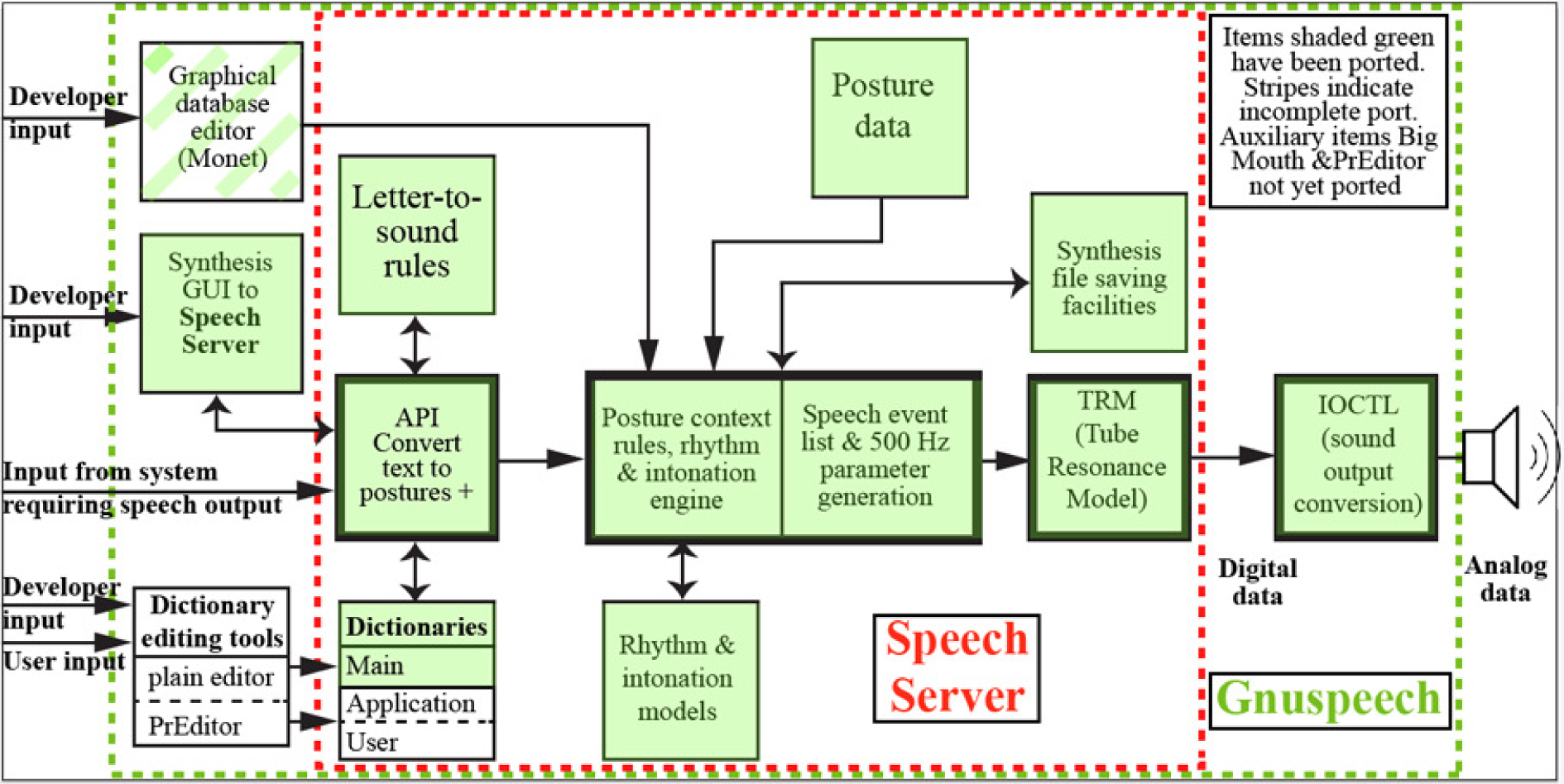

gnuspeech makes it easy to produce high quality computer speech output, design new language databases, and create controlled speech stimuli for psychophysical experiments. gnuspeechsa is a cross-platform module of gnuspeech that allows command-line or application-based speech output. The software has been released as two tarballs that are available in the project Downloads area of https://savannah.gnu.org/projects/gnuspeech. Those wishing to contribute to the project will find the OS X (gnuspeech) and CMake (gnuspeechsa) sources in the Git repository on that same page. The gnuspeech suite still lacks some of the database editing components (see the Overview diagram below) but is otherwise complete and working, allowing articulatory speech synthesis of English, with control of intonation and tempo, and the ability to view the parameter tracks and intonation contours generated. The intonation contours may be edited in various ways, as described in the Monet manual. Monet provides interactive access to the synthesis controls. TRAcT provides interactive access to the underlying tube resonance model that converts the parameters into sound by emulating the human vocal tract.

The suite of programs uses a true articulatory model of the vocal tract and incorporates models of English rhythm and intonation based on extensive research that sets a new standard for synthetic speech.

The original NeXT computer implementation is complete, and is available from the NeXT branch of the SVN repository linked above. The port to GNU/Linux under GNUStep, also in the SVN repository under the appropriate branch, provides English text-to-speech capability, but parts of the database creation tools are still in the process of being ported.

Credits for research and implementation of the gnuspeech system appear in the section Thanks to those who have helped below. Some of the features of gnuspeech, with the tools that are part of the software suite, include:

It is a play on words. This is a new (g-nu) “event-based” approach to speech synthesis from text, that uses an accurate articulatory model rather than a formant-based approximation. It is also a GNU project, aimed at providing high quality text-to-speech output for GNU/Linux, Mac OS X, and other platforms. In addition, it provides comprehensive tools for psychophysical and linguistic experiments as well as for creating the databases for arbitrary languages.

The goal of the project is to create the best speech synthesis software on the planet.

The first official release has now been made, as of October 14th 2015. Additional material is available for GNUStep, Mac OS X and NeXT (NeXTSTEP 3.0), for anonymous download from the project SVN repository (https://savannah.gnu.org/projects/gnuspeech). All provide text-to-speech capability. For GNUStep and OS X the database creation and inspection tools (such as TRAcT) can be used as intended, but work remains to be done to complete the database creation components of Monet that are needed for psychophysical/linguistic experiments, and for setting up new languages. The most recent SVN repository material has now been migrated to a Git repository on the savannah site, whilst the older material remains in the SVN repository. These repositories also provide the source for project members who continue to work on development. New members are welcome.

It would be a good idea for those interested in the work to join the mailing list, to provide some feedback, ask questions, work on the project, and so on.

Helpers and users can join the project mailing list by visiting the subscription page (https://lists.gnu.org/mailman/listinfo/gnuspeech-contact), and send mail to the group. Offers of help receive special attention! :-)

The full project implementation history is described in a separate page (https://www.gnu.org/software/gnuspeech/project-history.html) to avoid overloading this one.

In summary, much of the core software has been ported to the Mac under OS X, and to GNU/Linux under GNUStep. All current sources and builds are in the Git repository, though older material, including the GNU/Linux/GNUStep and NeXT implementations, is only in the SVN repository. Speech may be produced from input text. The development facilities for managing and creating new language databases, or modifying the existing English database for text-to-speech, are incomplete, but mainly require only the file-writing components. Monet provides the tools needed for psychophysical and linguistic experiments. TRAcT provides direct access to the tube model.

gnuspeech is currently fully available as a NeXTSTEP 3.x version in the SVN repository along with the GNU/Linux/GNUStep version, which is incomplete though functional. Passwords for the original NeXT version (user and developer) are available in the “private” file in the NeXT branch. Tarballs for the initial release versions of gnuspeech and gnuspeechsa are available from the Downloads area of the savannah project page (https://savannah.gnu.org/projects/gnuspeech).

The original NeXT User and Developer Kits are complete, but do not run under OS X or under GNUStep on GNU/Linux. They also suffer from the limitations of a slow machine, so that shorter TRM lengths (< ~15 cm) cannot be used in real time, though the software synthesis option allows this restriction to be avoided. To activate the NeXT kits, choose any password from the very large selection provided in the file “nextstep / trunk / priv / SerialNumbers”, such as “bb976d4a” for User 26 or “ebe22748” for Dev 15. In fact, you can use these passwords. But you need a NeXT computer, of course; try Black Hole, Inc. (https://www.blackholeinc.com) if you'd like one.

Developers should contact the authors/developers through the GNU project facilities (https://savannah.gnu.org/projects/gnuspeech). To join the project mailing list, you can go directly to the subscription page (https://lists.gnu.org/mailman/listinfo/gnuspeech-contact). Papers and manuals are available on-line (see below).

A number of papers and manuals relevant to gnuspeech exist:

Some examples of the papers by other researchers that helped us in developing gnuspeech include:

but there are far too many to list them all. Further papers may be found in the citations incorporated in the relevant papers noted above and/or listed on David Hill's university web site (https://pages.cpsc.ucalgary.ca/~hill).

See the section on Manuals and papers

To contact the maintainers of gnuspeech, report a bug, contribute fixes or improvements, join the development team, or join the gnuspeech mailing list, please visit the gnuspeech project page (https://savannah.gnu.org/projects/gnuspeech) and use the facilities provided. The mailing list can be accessed under the section “Communication Tools”. To help with the project work you can also contact Professor David Hill (hilld-at-ucalgary-dot-ca) directly.

The research that provides the foundation of the system was carried out in research departments in France, Sweden, Poland, and Canada and is ongoing. The original system was commercialised by a now-liquidated University of Calgary spin-off company—Trillium Sound Research Inc. All the software has subsequently been donated by its creators to the Free Software Foundation forming the basis of the GNU Project gnuspeech. It is freely available under a General Public Licence, as described herein.

Many people have contributed to the work, either directly on the project, or indirectly through relevant research. The latter appear in the citations to the papers referenced above. Of particular note are Perry Cook & Julius Smith (Center for Computer Research in Music and Acoustics), for the waveguide model and the DSP Music Kit, and René Carré (at the Département Signal, École Nationale Supérieure des Télécommunications in Paris). Carré’s work was, in turn, based on work on formant sensitivity analysis by Gunnar Fant and his colleagues at the Speech Technology Lab of the Royal Institute of Technology in Stockholm, Sweden. The original gnuspeech system was created over several years from 1990 to 1995 by the University of Calgary technology-transfer spin-off company Trillium Sound Research Inc., founded by David Hill, Leonard Manzara and Craig Schock at Leonard's suggestion. The work then and since was mainly performed by the following:

Thanks guys! Dalmazio, Steve and Marcelo deserve a special measure of thanks for their most recent volunteer work.

David Hill is responsible for writing this gnuspeech page. Thanks to Steve Nygard for his helpful criticisms.

Copyright (C) 2012, 2015 David R. Hill,

Verbatim copying and distribution of this article in its entirety or in part is permitted in any medium, provided this copyright notice is preserved and the source made clear.

Page originally created in the mists of time (2004?)

Last modified: Sun Oct 18 22:21:00 PDT 2015